E-E-A-T delivers ranking stability

Sites with a complete E-E-A-T signal architecture recover from Google core updates four times faster than sites without one, because clearly demonstrated quality signals reduce the ranking ambiguity that causes volatility at each update cycle.

YMYL pages receiving proper expert attribution, sourcing, regulatory disclaimers, and editorial review documentation consistently see ranking improvements of 60 to 70 percent within six months of full E-E-A-T program implementation.

Sites with verified author expertise, institutional trust signals, and structured Person and Organization schema are cited in Google AI Overviews three times more frequently than sites with weak E-E-A-T signals.

Audit, build, verify, maintain

E-E-A-T signal audit

Author and expert profile build

Trust page architecture

YMYL content review and remediation

Schema and off-site citation build

Ongoing E-E-A-T maintenance

E-E-A-T for Indian brands

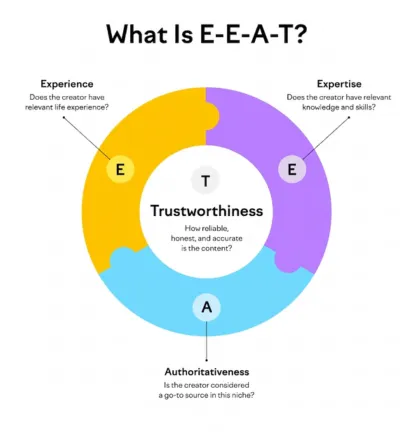

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. Google uses these standards to assess whether content is produced by people who have genuine experience with the subject, verifiable expertise in the domain, an established reputation as authoritative sources, and a track record of trustworthy content. Quality evaluators apply these standards when rating pages in Google's search quality evaluation program, and the patterns they identify feed into algorithm calibration at scale.

Google added the first E, for Experience, to the original E-A-T framework in December 2022. Experience refers to first-hand, lived experience with the subject matter rather than purely academic expertise. A product review written by someone who has used the product demonstrates Experience. A guide to a medical condition co-written by a patient and a doctor demonstrates both Experience and Expertise. The addition of Experience increased the weight given to practical, demonstrable knowledge alongside formal credentials.

E-E-A-T is not a direct algorithmic ranking signal in the way that PageRank or Core Web Vitals are. It is a quality framework that Google's human quality evaluators apply when rating pages. Their ratings do not change the rankings of the individual pages they assess; instead, Google uses them to evaluate and calibrate its ranking systems, which then reward or suppress similar sites at scale. Sites with strong E-E-A-T signals consistently rank more stably through core updates, recover faster when they drop, and are cited more frequently in AI-generated answers than sites with weak E-E-A-T.

YMYL stands for Your Money or Your Life. It covers content that could significantly affect a person's financial situation, health, safety, or wellbeing, including medical, legal, financial, news, and safety topics. Google applies the strictest E-E-A-T standards to YMYL content because the consequences of ranking inaccurate or low-quality information are most severe in these categories. Businesses operating in health, finance, law, insurance, and news should treat E-E-A-T as a non-negotiable foundation, not an optional improvement.

Yes, small businesses can build strong E-E-A-T; it is not reserved for large institutions. A small business with a clearly identified founder, a verifiable professional background, a documented service history, client reviews on credible platforms, and an honest About page can demonstrate strong E-E-A-T for its specific subject domain. The standard required is proportional to the subject matter and the competitive context. A local accountant does not need the same breadth of citations as a national financial publication, but must demonstrate verifiable expertise in accountancy within their market.

An editorial policy page should cover how content topics are selected, the qualifications required of contributors, the review and fact-checking process, how sources are evaluated and cited, how errors are corrected and updates are dated, and the standards for sponsored or partner content disclosure. For YMYL sites, the credentials of the reviewing experts should be named and verifiable. The page should be linked from the footer and from relevant content pages, not buried where quality evaluators cannot find it.
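The checklist above lends itself to a simple audit during the E-E-A-T signal audit step. The Python sketch below is a minimal illustration rather than a standard tool: it assumes the policy page has already been parsed into a mapping of section names to text, and that you know which places link to it. The section names, the `audit_editorial_policy` function, and the `"footer"` link label are hypothetical.

```python
from typing import Dict, Iterable, List

# Hypothetical section names mirroring the editorial policy checklist.
REQUIRED_SECTIONS = (
    "topic selection",
    "contributor qualifications",
    "review and fact-checking",
    "sourcing and citation",
    "corrections and update dating",
    "sponsored content disclosure",
)


def audit_editorial_policy(
    sections: Dict[str, str],
    linked_from: Iterable[str],
    ymyl: bool = False,
) -> List[str]:
    """Return a list of issues found on an editorial policy page.

    sections: section name -> section text (assumed already extracted).
    linked_from: labels of places linking to the page, e.g. "footer"
    or a content page path (hypothetical convention).
    """
    required = list(REQUIRED_SECTIONS)
    if ymyl:
        # YMYL sites should also name their reviewing experts.
        required.append("reviewing expert credentials")

    # A section counts as missing if it is absent or contains only whitespace.
    issues = [
        f"missing or empty section: {name}"
        for name in required
        if not sections.get(name, "").strip()
    ]

    sources = set(linked_from)
    if "footer" not in sources:
        issues.append("not linked from the site footer")
    if not sources - {"footer"}:
        issues.append("not linked from any content page")
    return issues


# Hypothetical sample policy with one empty and two absent sections.
policy = {
    "topic selection": "Topics come from reader questions and search demand.",
    "contributor qualifications": "Writers hold relevant professional credentials.",
    "review and fact-checking": "Every article is reviewed before publication.",
    "sourcing and citation": "Primary sources are cited inline.",
    "corrections and update dating": "",
}
for issue in audit_editorial_policy(policy, ["footer"], ymyl=True):
    print(issue)
```

With this sample data the audit flags the empty corrections section, the missing sponsored-content and reviewing-expert sections, and the absence of links from content pages.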

AI Overviews and other generative search systems prioritize sources they can identify as credible entities with verifiable expertise. Sites with strong E-E-A-T architecture, including named authors with confirmed credentials, institutional trust signals, and structured data confirming entity identity, are cited more frequently than anonymous or poorly attributed sources. Building E-E-A-T is therefore directly relevant to both traditional ranking and AI search visibility.
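Entity identity is commonly expressed with schema.org structured data embedded in the page as JSON-LD. The Python sketch below shows one minimal way to generate it for an article with a single named author. The people, URLs, and helper names (`build_article_jsonld`, `to_script_tag`) are hypothetical, and the properties used are only a small subset of what the schema.org `Article`, `Person`, and `Organization` types support.

```python
import json

# Hypothetical author and organization details; every value should be
# replaced with verified, publicly checkable facts.
AUTHOR = {
    "name": "Jane Example",
    "job_title": "Chartered Accountant",
    "profile_url": "https://www.example.com/authors/jane-example",
    "same_as": ["https://www.linkedin.com/in/jane-example"],
}
ORGANIZATION = {
    "name": "Example Advisory",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "same_as": ["https://www.linkedin.com/company/example-advisory"],
}


def build_article_jsonld(headline, author, organization, published, modified):
    """Link an Article to a named Person author and an Organization publisher."""
    person = {
        "@type": "Person",
        "name": author["name"],
        "jobTitle": author["job_title"],
        "url": author["profile_url"],
        # sameAs lists independent profiles that confirm the same identity.
        "sameAs": author["same_as"],
        "worksFor": {"@type": "Organization", "name": organization["name"]},
    }
    publisher = {
        "@type": "Organization",
        "name": organization["name"],
        "url": organization["url"],
        "logo": organization["logo"],
        "sameAs": organization["same_as"],
    }
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": person,
        "publisher": publisher,
        "datePublished": published,
        "dateModified": modified,
    }


def to_script_tag(data):
    """Serialize the JSON-LD dict into the script element embedded in the page."""
    return (
        '<script type="application/ld+json">'
        + json.dumps(data, indent=2)
        + "</script>"
    )


article = build_article_jsonld(
    "Example guide title",
    AUTHOR,
    ORGANIZATION,
    "2024-01-15",
    "2024-06-01",
)
print(to_script_tag(article))
```

The `sameAs` arrays are where off-site profiles such as LinkedIn or professional association directories connect back to the on-site entity; they should point only to profiles that exist and describe the same person or business.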

The strongest third-party E-E-A-T signals are mentions and citations in established industry publications, profiles on professional association or regulatory body directories, speaking engagements or expert interviews on credible platforms, verified profiles on LinkedIn and other professional networks, and any external source that confirms the brand's or author's expertise without being self-published. These off-site signals are more credible to quality evaluators than any number of on-site claims because they exist independently of the brand's own content.

On-site E-E-A-T improvements such as author profile updates, editorial policy pages, and schema implementation can influence how quality evaluators assess the site within 30 to 60 days. YMYL content remediation shows ranking improvement within 60 to 90 days of implementation. The broader E-E-A-T authority built through third-party citations and external mentions accumulates over 6 to 12 months and has the most durable impact on ranking stability across core algorithm updates.

E-E-A-T improvement is measured through a combination of proxy signals rather than a single metric. We track ranking stability through core updates, ranking improvements on YMYL pages following content remediation, Knowledge Graph panel presence, AI Overview citation frequency, third-party mention volume and quality, and Google Search Console (GSC) impressions and clicks on affected page sets. Monthly reports compare pre- and post-implementation performance across each signal category to demonstrate the compounding effect of the E-E-A-T program over time.
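Once the data is exported, the pre- and post-implementation comparison reduces to simple arithmetic. This Python sketch assumes daily values for each metric have already been pulled (for example from a GSC performance export) into plain lists; the function names and data layout are hypothetical, the sample numbers are invented for illustration, and measuring ranking volatility as a population standard deviation of average position is one reasonable choice rather than a standard definition.

```python
from statistics import mean, pstdev


def percent_change(before, after):
    """Percentage change from the pre- to the post-implementation value."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) * 100 / before


def compare_periods(pre, post):
    """Compare mean daily values per metric for the same page set.

    pre, post: metric name -> list of daily values (hypothetical layout).
    Metrics missing from either period are skipped.
    """
    return {
        metric: percent_change(mean(pre[metric]), mean(post[metric]))
        for metric in pre
        if metric in post
    }


def position_volatility(daily_positions):
    """Population standard deviation of average position; lower is more stable."""
    return pstdev(daily_positions)


# Invented sample values for illustration only.
pre = {"clicks": [100, 100, 100], "impressions": [2000, 2000]}
post = {"clicks": [120, 130, 125], "impressions": [2200, 2200]}
print(compare_periods(pre, post))  # {'clicks': 25.0, 'impressions': 10.0}
```

Comparing `position_volatility` for a window before and after a core update gives a rough stability figure to sit alongside the traffic changes.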